In clinic, the friction point is rarely curiosity about AI; it’s governance. A supervisee wants help rewriting a sensitive school report, summarizing an OT evaluation, or drafting a consent form in simpler Arabic, then asks the question we all recognize: “Can I paste the real text?” The ethical discomfort is that most chat systems are cloud-mediated by design, and our default answer becomes a risk-management lecture rather than a clinically useful pathway.

That’s why the claim, “Imagine ChatGPT, but installed directly on your device… private, offline, and free,” spreads so quickly. It sounds like the long-promised reconciliation of capability and confidentiality. But slogans are not safeguards, and “CEO energy” is not a clinical governance framework. Even when a tool comes from a major company, brand is not a substitute for evaluating workflows, auditability, and failure modes.

What this points to, more precisely, is the growing ecosystem of local-capable models, including Gemma 4, that can be downloaded and run in environments you control. The practical promise is simple: you ask questions, it drafts text, it helps structure documentation, and in some setups it can support image-related work, while computation can happen on your own device. That “where the model runs” detail is not cosmetic; it is the whole privacy story.

The “price” point matters for therapists because it changes adoption pressure and boundaries. If a model is “free” to download and run, the barrier shifts from subscription gatekeeping to hardware limits and setup time. You still “pay,” just differently: battery drain and heat, local storage, occasional troubleshooting, and the need for someone to own maintenance. But the psychological shift is important: capability feels close enough to use in real workflows, not only as a toy.

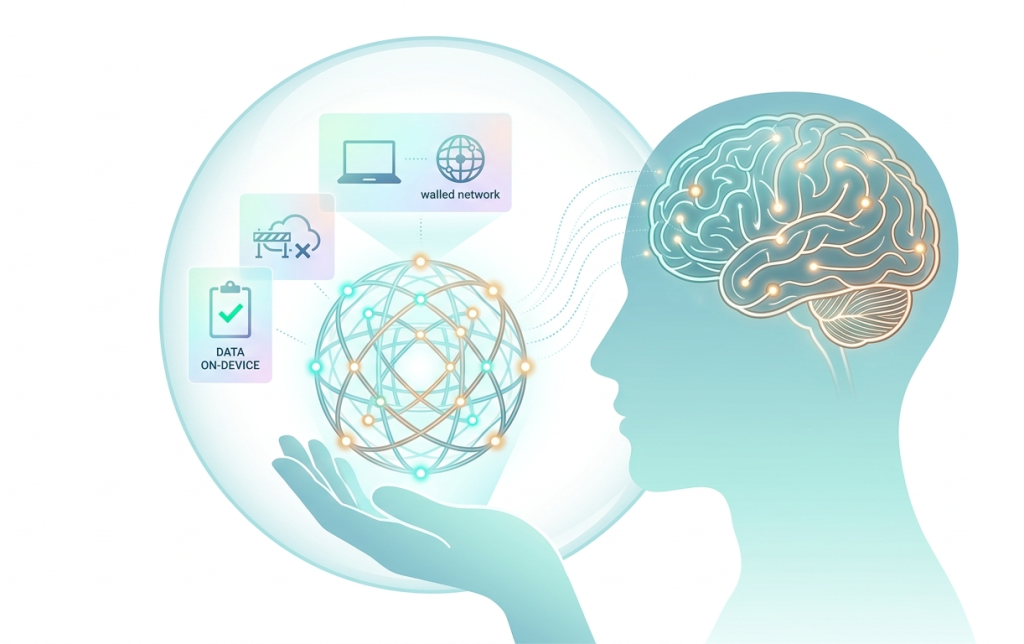

This is where the comparison belongs, because it sits right inside that workflow decision: you are choosing not only an AI, but a data path. Gemma 4 is one local option among several; many people also run DeepSeek-style models locally, and others choose Llama, Mistral, or Qwen depending on hardware and licensing comfort. In short, local models (Gemma, DeepSeek, Llama, Mistral, or Qwen running on-device) can support stricter confidentiality by keeping text in-house. Cloud models (ChatGPT, Claude, or Gemini-style services) often deliver more convenience and scalability, but they require clearer rules because identifiable data may leave your device unless you have an enterprise-controlled setup.
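To make the "data path" idea concrete, here is a minimal Python sketch of how a clinic might write the rule down. The category names, the `enterprise_approved` flag, and the function itself are hypothetical illustrations, not part of any real tool, and the actual policy has to come from your own governance process.

```python
from enum import Enum


class Sensitivity(Enum):
    SYNTHETIC = "synthetic"          # invented examples, no real client
    DEIDENTIFIED = "de-identified"   # identifiers removed and checked
    IDENTIFIABLE = "identifiable"    # real names, dates, schools, etc.


class DataPath(Enum):
    LOCAL = "local"                        # model runs on your own device
    CLOUD = "cloud"                        # consumer chat tool
    ENTERPRISE_CLOUD = "enterprise_cloud"  # contractually controlled setup


def allowed_paths(sensitivity: Sensitivity,
                  enterprise_approved: bool = False) -> set[DataPath]:
    """Return the data paths this (hypothetical) policy permits."""
    # A verified local runtime is acceptable for every category here.
    paths = {DataPath.LOCAL}
    if sensitivity in (Sensitivity.SYNTHETIC, Sensitivity.DEIDENTIFIED):
        # Non-identifiable material may also go to cloud tools.
        paths |= {DataPath.CLOUD, DataPath.ENTERPRISE_CLOUD}
    elif enterprise_approved:
        # Identifiable text leaves the device only under explicit policy.
        paths.add(DataPath.ENTERPRISE_CLOUD)
    return paths
```

For a supervisee asking "Can I paste the real text?", `allowed_paths(Sensitivity.IDENTIFIABLE)` returns only the local path unless policy has explicitly approved an enterprise setup.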

That’s why the phrase “Google sees nothing” is directionally true only under a specific condition: you are actually running it locally. “Local” is not a vibe; it’s an implementation choice: offline runtime, no hidden uploads, and settings you can verify. If you test the model in a browser demo, a hosted notebook, or any web app, you’re no longer in “offline” territory, and you should treat it like any other cloud tool: fine for synthetic or de-identified material, not fine for identifiable documents unless policy explicitly allows it.
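One small, checkable piece of "settings you can verify" is the address your app actually sends text to. The sketch below assumes your local runtime exposes an HTTP endpoint and that you can read its URL from the app's settings; the function name and the example URLs are hypothetical. It is a necessary check, not a sufficient one, since an app that talks to a loopback address can still upload telemetry elsewhere.

```python
import ipaddress
from urllib.parse import urlparse


def is_loopback_endpoint(url: str) -> bool:
    """Return True if the URL's host points back at this same machine."""
    host = urlparse(url).hostname
    if host is None:
        # No recognizable host (e.g. missing scheme): treat as not local.
        return False
    if host == "localhost":
        # Conventional name for this machine; a modified hosts file
        # could redirect it, so this is an assumption, not a guarantee.
        return True
    try:
        # True for 127.0.0.0/8 and the IPv6 loopback address ::1.
        return ipaddress.ip_address(host).is_loopback
    except ValueError:
        # Any other hostname (a cloud API, a hosted notebook) is remote.
        return False
```

With this, `is_loopback_endpoint("http://127.0.0.1:8080/v1")` is true, while a hosted address such as `https://api.example.com/v1` is not and should be treated like any other cloud tool.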

Clinically, the most defensible value proposition of local inference is not novelty; it is a narrower but meaningful shift in what can be done without exporting identifiable data. Drafting discharge summaries in a consistent format, creating parent-friendly psychoeducation, adapting worksheets across reading levels, or generating structured session-plan templates can reduce administrative load. If the model is truly running offline, these tasks can be done while keeping protected content on the device, closer to the practical spirit of confidentiality, even when policy language lags behind technology.

Evidence-based practice pushes a harder question: where does this help clinical reasoning rather than merely accelerate text production? The risk is that fluent output can masquerade as warranted inference, especially in formulations, risk narratives, or “professional-sounding” reports that feel authoritative because they read well. Used well, a local model supports the plumbing of care (formatting, translation drafts, checklists, reflective prompts), while the clinician retains responsibility for interpretation, differential thinking, and the therapeutic relationship.

The “no limits” claim also deserves a clinician’s skepticism. Local models are not capped by a subscription counter, but they are constrained by memory, thermals, battery, and model size trade-offs. More importantly, offline does not equal harmless: hallucinations, bias, and overconfidence persist, and sometimes become more insidious when the system feels safe because it is private.
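The memory constraint can be roughed out with simple arithmetic: weights take roughly (number of parameters) × (bits per weight) ÷ 8 bytes. The sketch below is a back-of-envelope estimate only; the function name and the example model sizes are illustrative assumptions, and a real runtime needs additional memory on top of the weights for context and overhead.

```python
def estimated_weight_memory_gb(n_params_billion: float,
                               bits_per_weight: int) -> float:
    """Approximate memory for model weights alone, in gigabytes (10^9 bytes)."""
    # parameters * bits per weight / 8 bits per byte
    bytes_total = n_params_billion * 1e9 * bits_per_weight / 8
    return bytes_total / 1e9


# Illustrative sizes: a 7-billion-parameter model at 16-bit and at 4-bit.
full_precision = estimated_weight_memory_gb(7, 16)  # about 14 GB
quantized = estimated_weight_memory_gb(7, 4)        # about 3.5 GB
```

This is the trade-off in practice: the compressed version may fit on an ordinary laptop, but compression and smaller model sizes generally cost some output quality, which is one more reason to keep verification standards unchanged.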

Ethically, local AI concentrates accountability rather than dissolving it. If a clinician chooses to process identifiable material on-device, they also inherit responsibilities around device security, app telemetry/logging, model provenance, update hygiene, and documentation of use. Transparency is a workflow discipline: noting when AI assistance was used, what kind of inputs were provided, and how outputs were verified supports data integrity and defensible decision-making.
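As one way to turn that discipline into a habit, here is a minimal Python sketch of an AI-use log entry. The field names and the `make_record` helper are hypothetical, not a standard; the one deliberate design choice is that the entry stores a hash of the output rather than the text, so the log itself does not become another copy of client material.

```python
import hashlib
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class AIUseRecord:
    timestamp_utc: str      # when AI assistance was used
    task: str               # e.g. "simplify consent form"
    input_kind: str         # e.g. "identifiable", "de-identified"
    model_name: str         # which model and version was run
    ran_locally: bool       # whether computation stayed on-device
    verified_by: str        # clinician who checked the output
    verification_note: str  # how the output was verified
    output_sha256: str      # fingerprint of the output, not the text


def make_record(task: str, input_kind: str, model_name: str,
                ran_locally: bool, verified_by: str,
                verification_note: str, output_text: str) -> AIUseRecord:
    """Build a log entry without retaining the clinical text itself."""
    return AIUseRecord(
        timestamp_utc=datetime.now(timezone.utc).isoformat(),
        task=task,
        input_kind=input_kind,
        model_name=model_name,
        ran_locally=ran_locally,
        verified_by=verified_by,
        verification_note=verification_note,
        output_sha256=hashlib.sha256(output_text.encode("utf-8")).hexdigest(),
    )
```

A record like this answers the three questions above (when, what kind of input, how verified) and can sit alongside existing documentation rather than replacing it.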

What is most clinically interesting here is not the bravado of “offline intelligence,” but the opening of a more nuanced design space: small local models for privacy-sensitive drafting, larger systems for literature work under controlled governance, and hybrid approaches that treat AI as an assistant to clinical judgment rather than a proxy for it. The next wave of useful work, worthy of supervision projects and pragmatic trials, is testing whether local inference measurably reduces documentation burden and improves patient comprehension without quietly eroding standards of verification.